Pesky Causes of Response Time Spikes

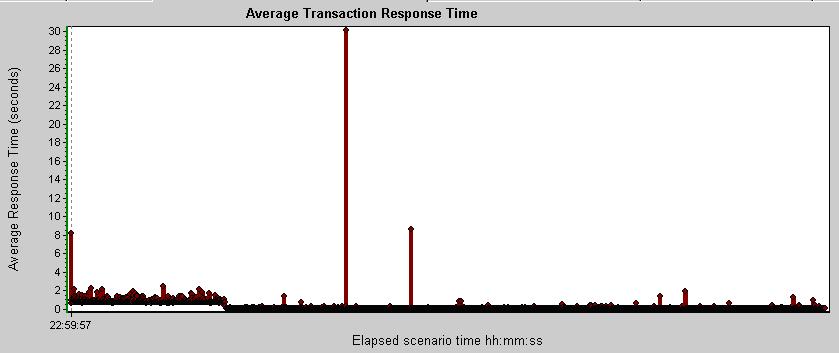

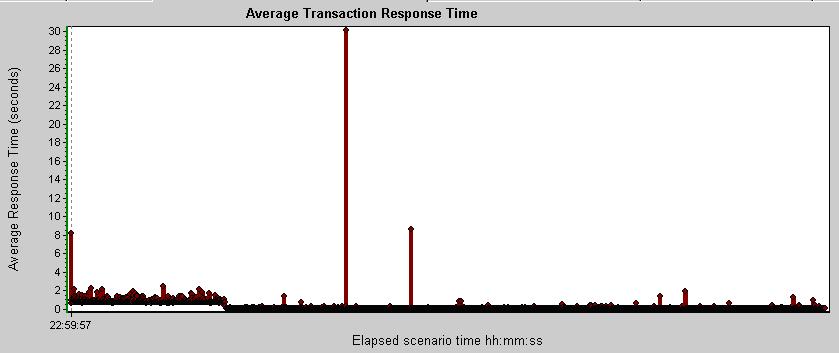

Sudden spikes in response time can

occur even on systems with plenty of capacity.

The graph shown displays response times at a fine level of

granularity (one second per tick),

which is usually not available in production.

Since many users

share a common network, databases, devices, and other resources,

inevitably, just as one of many players eventually

picks all the numbers of a lottery,

at some point many will request the same resource at the same time.

- Do databases create and release locks?

- Is the system programmed with inappropriate queuing and locking mechanisms?

- Are duplicate keys created when collisions occur?

Many conduct contention testing to ensure that these questions are

answered and that contention errors are handled properly.

If left unaddressed, spikes in response time

can create a cascade of trouble and

have a chance of damaging customer goodwill

(albeit among the unlucky few).

Some managers say that because they occur rarely,

there is often not enough "payback" in the effort

to find and fix contention issues.

Using terms from Statistical Process Control,

contention testing identifies what really happens

when collisions occur.

Contention testing exposes the effects of both

common causes of spikes, which are always

inherent in the system (such as poor programming), and

special causes of spikes, which are

occasionally imposed on the system (such as many users at once).

Difficult in Production

Once in production, the root causes of spikes and errors

are notoriously difficult to pinpoint.

Individual spikes may not be exposed by some

production application performance monitoring (APM) systems, which

focus on averages and ignore the worst responses

as "outliers".

The resources for which transactions contend with

other transactions can be identified only if very precise

(sub-second level) tracing is maintained for

every activity at the same time,

a luxury almost no one can afford in production.

So contention issues need to be found during development,

before they are allowed to lurk in production.

That way, even when contention issues are not fixed,

troubleshooters and developers of production systems

are thankful for knowing about them

and for having a way to recognize them

before they are encountered.

When contention issues do eventually occur,

people can respond to them efficiently

and without the drama and stress of production crises.

That's Not Really Contention Testing, Dear

One has a better chance of winning the lottery than duplicating

contention issues using conventional stress, longevity, and

"day in the life" test scenarios.

They are unlikely to show transactions

contending with each other for resources

unless they are executed frequently enough to land on the

same tenth of a second together.

Let's do the math: In a one-hour run of 60 minutes,

there are at least 36,000 possible slots of

execution (60 minutes * 60 seconds/minute * 10 slots/second).

The chance of two transactions occurring at the same time is therefore

1 in 36,000²/2, or 1 in 648,000,000.
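The arithmetic can be checked with a short script. This is only a sketch, and it rests on a simplifying assumption the text does not state: each of the two transactions is issued exactly once, at an independent, uniformly random tenth-of-a-second slot. It reproduces the slot count and the 648,000,000 figure, and for comparison also computes the chance that the pair shares any one slot, which under this assumption works out to 1 in 36,000. The variable names are illustrative.

```python
from fractions import Fraction

# Slot size used in the text: tenths of a second.
SLOTS_PER_SECOND = 10
RUN_SECONDS = 60 * 60  # a one-hour run

slots = RUN_SECONDS * SLOTS_PER_SECOND

# The figure quoted in the text: slots squared, divided by two.
quoted_figure = slots ** 2 // 2

# Assumption (not from the text): two transactions, each issued once
# at an independent, uniformly random slot.
p_both_in_one_given_slot = Fraction(1, slots ** 2)
p_share_any_slot = Fraction(1, slots)

print(f"slots in the run:            {slots:,}")
print(f"figure quoted in the text:   1 in {quoted_figure:,}")
print(f"both in one particular slot: 1 in {p_both_in_one_given_slot.denominator:,}")
print(f"pair shares any single slot: 1 in {p_share_any_slot.denominator:,}")
```

Whichever figure is used, a single unsynchronized pair is unlikely to collide in a given run, which is the point being made here.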

Doing "longevity" runs of many hours

and running a stress test (with heavier loads) increase the

likelihood of collisions occurring.

But they are like buying five lottery tickets instead of one:

the chances do not increase enough to ensure winning

because there are so many possible values.

But then one has to determine whether a spike is caused by

the load or by contention from the inherent architecture

of the system. Thus, contention testing should be

done during development

work and not during pre-production (when it's usually too

expensive to make architectural changes).

With contention testing, the number of vusers and rate of requests

are not as important as the synchronization of requests.

In order to expose contention issues which cause spikes in response time,

different vusers need to execute different transactions

at exactly the same time (simultaneously, not just concurrently),

preferably when nothing else is running on the same system.

Contention Testing Features and Scenarios Under-used

Luckily,

LoadRunner provides a mechanism to identify transaction contention through synchronization.

HP's highest level of LoadRunner Certification Exam

asks many questions on use of LoadRunner's synchronization.

Yet, few performance test professionals use the feature.

This may be due to misunderstandings fostered by this misprint in

the HP Virtual User Generator User Guide [page 628 in v9.51]:

To emulate heavy user load on your client/server system,

you synchronize Vusers to perform a task at exactly the same moment...

The reality is that, unlike conventional stress testing,

imposing a "heavy load" is not the goal of contention testing,

but the symptom exposed by contention testing.

Contention testing is another class (type) of performance testing,

different from

stress, longevity, or data volume testing scenarios.

Because they occur due to chance, contention conditions

CANNOT be practically duplicated by typical

performance stress testing approaches.

Contention Testing using Rendezvous Functions

Let's revisit the HP manual with one revision:

To emulate heavy [contentious] user load on your client/server system,

you synchronize Vusers to perform a task at exactly the same moment by creating a

rendezvous point. When a Vuser arrives at the rendezvous point, it is held by

the Controller until all Vusers participating in the rendezvous arrive.

You designate the meeting place by inserting a lr_rendezvous() function into your Vuser script.

I added "[contentious]" because a contention test differs from stress testing, which imposes

a heavy load (using many Vusers and/or making requests quickly).

A contention test consumes memory because the classes needed to process specific transactions

must all be held in memory at the same time, since the transactions which they process are

requested at the same time.

Also, a contention test will consume more processing time and

more CPU cycles if the system needs to do extra work to

swap memory or otherwise block resources

because it really cannot process several different transactions at the same time.
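The hold-until-everyone-arrives behaviour described in the manual excerpt can be imitated outside LoadRunner. The following is a Python analogy, not LoadRunner code: `threading.Barrier` stands in for the Controller, each thread stands in for a Vuser, and the function name `run_rendezvous` and the delay values are made up for illustration.

```python
import threading
import time


def run_rendezvous(delays):
    """Start one thread per delay and make them all meet at a shared barrier."""
    barrier = threading.Barrier(len(delays))
    arrivals = [0.0] * len(delays)
    releases = [0.0] * len(delays)

    def vuser(index, delay):
        time.sleep(delay)                   # participants arrive at different times
        arrivals[index] = time.monotonic()
        barrier.wait()                      # held here until every participant has arrived
        releases[index] = time.monotonic()
        # The transaction under test would be issued at this point.

    threads = [
        threading.Thread(target=vuser, args=(i, d)) for i, d in enumerate(delays)
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return arrivals, releases


arrivals, releases = run_rendezvous([0.0, 0.05, 0.1])
print("last arrival :", max(arrivals))
print("first release:", min(releases))
```

No participant is released before the last one has arrived, so the requests that follow the barrier are issued as close to simultaneously as the scheduler allows.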

Complications with Rendezvous Functions

The complication with using lr_rendezvous() functions is that such

scripts can only be run using the LoadRunner Controller

rather than on the desktop VuGen program.

So one can debug a script with lr_rendezvous() only using batch debugging techniques rather than

interactive pausing of scripts in real time during execution.

Adding rendezvous code can be done during recording or after recording by inserting it into the

generated script. Simply typing in the lr_rendezvous() command does not work because

the guided rendezvous insertion also

adds values in the script's .usr file.

Changes to an lr_rendezvous statement should be done in Tree View

rather than Script View. [page 823].

Another difficulty with using lr_rendezvous() functions is that

each vuser must use the same rendezvous meeting value,

yet execute different transactions.

Normally, it would take much time to coordinate and hand-code triads of:

- specific rendezvous values

- used to sync specific transactions

- executed by specific Vusers.

Sequential Transaction Set Build-Up

Most transactions are not autonomous.

Most transactions require one or more predecessor transactions which must

complete successfully before their successor can execute.

For example, it may not be possible to invoke a transaction to select a restricted menu item (T03)

unless a login and password transaction (T02) completes authentication

from a page obtained by invoking a particular URL (T01).

In such cases, a second vuser starts a second sequence

of the same set of transactions

at the same time as the first vuser invokes the second transaction

in the sequence (T02).

Each step is controlled by a rendezvous point.

This approach creates successively larger sets

of transactions at each successive rendezvous point.

Rather than starting all users executing various transactions with a quick ramp-up,

this has the advantage of building up memory requirements gradually, such that

at whatever point the script fails,

the amount of memory is known from the last good set of transactions.

The trouble with this approach is it takes a lot of work to arrange.

Rendezvous Run Control Values

I have tried to make things easier and quicker by

scripting LoadRunner to be controlled by a parameter data file

containing one line for each triad of values for each run sequence.

Zero-padded numbers are used for rendezvous and transaction names

to ensure proper sorting by software.

The right-most column (named "p1_RecSeq") is a

sequential count of rows.

In the last row, its value is "end".

p1_Vuser,p1_Rendezvous_num,p1_TransName,p1_RecSeq

1,,T01,1

1,,T02,2

1,,T03,3

1,R01,T01,4

1,R02,T02,5

1,R03,T03,6

2,R02,T01,7

2,R03,T02,8

3,R03,T01,end

This file enables a LoadRunner script to use a

rendezvous point for each set of

transactions associated with a meeting value.

Thankfully, LoadRunner 9.51 ignores case differences in rendezvous meeting values.

The LoadRunner script is coded such that multiple vusers can use it

without making changes for each vuser scenario. This is possible because

the script compares the control file vuser id against the

Controller's own Vuser IDs, which by default begin from the number 1.

Alternatively, the script has been coded to recognize a "myvuserid"

Additional Attribute defined in Runtime Settings so that different values

can be specified for each vuser.
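To make the role of the control file concrete, here is a small Python sketch that reads the file shown above, groups transactions by rendezvous value, and picks out the rows belonging to one vuser. It illustrates the data layout only and is not the LoadRunner script itself; the function names are hypothetical.

```python
import csv
import io
from collections import defaultdict

# The control file from the text, with the header written without stray spaces.
CONTROL_FILE = """\
p1_Vuser,p1_Rendezvous_num,p1_TransName,p1_RecSeq
1,,T01,1
1,,T02,2
1,,T03,3
1,R01,T01,4
1,R02,T02,5
1,R03,T03,6
2,R02,T01,7
2,R03,T02,8
3,R03,T01,end
"""


def load_rows(text):
    """Parse the control file into a list of dicts keyed by column name."""
    return list(csv.DictReader(io.StringIO(text)))


def transactions_by_rendezvous(rows):
    """Map each rendezvous value to the (vuser, transaction) pairs that meet there."""
    groups = defaultdict(list)
    for row in rows:
        if row["p1_Rendezvous_num"]:  # rows with an empty value run stand-alone
            groups[row["p1_Rendezvous_num"]].append(
                (row["p1_Vuser"], row["p1_TransName"])
            )
    return dict(groups)


def rows_for_vuser(rows, vuser_id):
    """Select the rows whose p1_Vuser matches the given vuser id."""
    return [row for row in rows if row["p1_Vuser"] == str(vuser_id)]


rows = load_rows(CONTROL_FILE)
groups = transactions_by_rendezvous(rows)
for rendezvous, members in sorted(groups.items()):
    print(rendezvous, members)
```

Each successive rendezvous value gathers one more transaction than the last (one at R01, two at R02, three at R03), which is the build-up described in the previous section.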

I created an Excel spreadsheet

containing a VBA macro which generates

the CSV file from combinations defined in a worksheet

which specifies a matrix of transactions for each combination of

Vuser and Rendezvous id.
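The VBA macro itself is not reproduced here. As a rough stand-in, the following Python sketch generates rows in the same layout as the control file listed earlier, assuming the staggered pattern used there: vuser 1 first runs every transaction stand-alone, then each vuser v joins at rendezvous v and works through the sequence from T01. The function name `build_control_rows` is hypothetical.

```python
def build_control_rows(transaction_count):
    """Return the control file lines for a staggered build-up of transactions."""
    triads = []

    # Stand-alone baseline: vuser 1 runs each transaction without a rendezvous.
    for trans in range(1, transaction_count + 1):
        triads.append((1, "", f"T{trans:02d}"))

    # Staggered build-up: vuser v starts T01 at rendezvous v, T02 at v + 1, and so on.
    for vuser in range(1, transaction_count + 1):
        for rendezvous in range(vuser, transaction_count + 1):
            trans = rendezvous - vuser + 1
            triads.append((vuser, f"R{rendezvous:02d}", f"T{trans:02d}"))

    lines = ["p1_Vuser,p1_Rendezvous_num,p1_TransName,p1_RecSeq"]
    for seq, (vuser, rendezvous, trans) in enumerate(triads, start=1):
        rec_seq = "end" if seq == len(triads) else str(seq)  # last row is marked "end"
        lines.append(f"{vuser},{rendezvous},{trans},{rec_seq}")
    return lines


control_lines = build_control_rows(3)
print("\n".join(control_lines))
```

With three transactions this yields the nine data rows shown in the control file; a longer transaction sequence only changes the argument.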

Efficient Factorial Experiment Design

To identify which transactions contend with each other,

one doesn't have to test every possible combination of transactions

(e.g., use a "fully-crossed" experiment design)

which exponentially increases the number

of interactions between transactions (called "factors")

when an additional transaction is added.

Since transactions are executed simultaneously,

a matrix of transaction pairs

does not need to display both T2-T1 and T1-T2.

Since the pairing is logically symmetric,

only one of the two combinations is necessary.
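The saving from dropping mirrored pairs is easy to count. A minimal Python sketch, using four illustrative transaction names, compares the full grid of cells with the unordered pairs that actually need testing:

```python
from itertools import combinations

transactions = ["T01", "T02", "T03", "T04"]  # illustrative names

# Every cell of a full grid, including the diagonal and both orderings.
full_grid_cells = len(transactions) ** 2

# Unordered pairs only: T01-T02 is kept, its mirror T02-T01 is not.
unordered_pairs = list(combinations(transactions, 2))

print("cells in the full grid:", full_grid_cells)
print("pairs to test         :", len(unordered_pairs))
for first, second in unordered_pairs:
    print(f"  {first} with {second}")
```

For n transactions the number of unordered pairs is n(n-1)/2, so it grows far more slowly than a fully-crossed design.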

Smart mathematicians in the field of "Design of Experiments"

(DOE), such as Dr. Taguchi,

point out that a

"fractional factorial" experiment design

can be used to reduce the number of combinations tested.

If the response time of each treatment

(combination of transactions) does not need to be treated as a continuous value

(such as 3.4), one can use a simpler set of 2 levels of test outcomes

(either "same as stand-alone" or "much slower than stand-alone").

Contention Results Analysis and Verification

I created an Excel workbook and VBA macro to create this

Contention Testing Results Matrix to highlight

transaction combinations.

It uses data copied from a LoadRunner Analysis Summary report

of response times. Response times for transactions run independently

(not as part of a rendezvous point) are shown in light-green boxes on the

diagonal where the same transaction is on both axes.

Boxes beneath the diagonal contain the difference in average response time

between individual execution (without rendezvous) and

execution within a rendezvous.

The dark-green box shown here illustrates that

transaction T01 takes 31 seconds longer when run with T02.

This is calculated by comparing the response time of transaction

"T01" against

transaction "T01_R02" since

(referring to the scenario design)

T01 is run with T02 under rendezvous point R02.
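The comparison can be sketched in a few lines of Python. The naming convention ("T01" for the stand-alone run, "T01_R02" for the same transaction under rendezvous R02) comes from the text; the averages below are invented, chosen only so that the T01 difference comes out at 31 seconds, and the function name is hypothetical.

```python
import re

# Invented average response times in seconds, keyed by transaction name.
averages = {
    "T01": 2.0,
    "T02": 3.0,
    "T01_R02": 33.0,  # T01 run under rendezvous R02
    "T02_R02": 3.5,   # T02 run under rendezvous R02
}


def rendezvous_deltas(averages):
    """Return {(transaction, rendezvous): seconds slower than the stand-alone run}."""
    deltas = {}
    for name, average in averages.items():
        match = re.fullmatch(r"(T\d+)_(R\d+)", name)
        if match and match.group(1) in averages:
            base, rendezvous = match.group(1), match.group(2)
            deltas[(base, rendezvous)] = average - averages[base]
    return deltas


deltas = rendezvous_deltas(averages)
for (transaction, rendezvous), delta in sorted(deltas.items()):
    print(f"{transaction} under {rendezvous}: {delta:+.1f} s versus stand-alone")
```

Each value corresponds to one box beneath the diagonal of the results matrix.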

This, and any errors occurring during contention testing,

should be confirmed by repeated invocations of

that transaction set and rendezvous point.

Such an approach enables natural variation in results to be quantified.

An architectural issue is usually evident only when

the Std. Deviation is smaller than the Average

(which reveals that the Average does not happen because of pure chance).

Contention conditions are also identified in the

Average Response Time report by transactions with a

Maximum response time much higher than the Average or a

Std. (standard) Deviation

many times larger than the Average.
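A screening rule along these lines can be sketched in Python. The thresholds are assumptions chosen for illustration, not values from LoadRunner or from the text, and the sample standard deviation from the `statistics` module may differ slightly from what the Analysis report computes. The function name `looks_contended` is hypothetical.

```python
from statistics import mean, stdev


def looks_contended(samples, max_ratio=5.0, std_ratio=1.0):
    """Flag response times whose maximum or spread is large relative to the average.

    max_ratio and std_ratio are illustrative thresholds, not LoadRunner defaults.
    """
    average = mean(samples)
    return max(samples) > max_ratio * average or stdev(samples) > std_ratio * average


# Invented response times in seconds.
spiky = [1.0] * 9 + [50.0]       # one large spike among fast responses
steady = [2.0, 2.1, 1.9, 2.0]    # consistent responses

print("spiky flagged :", looks_contended(spiky))
print("steady flagged:", looks_contended(steady))
```

A flagged transaction is only a candidate; as noted above, repeating the same transaction set and rendezvous point is what confirms it.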

Among its other Analysis reports,

LoadRunner generates after each run a Rendezvous report.

When reading this report,

ensure that counts in the "Maximum"

column match the number of transactions designed to be in

each Rendezvous point.

Numbers in the Average and Std. Deviation columns can be ignored

because they are counted at various points during processing.
